Thursday, May 29, 2014

The Media Lab Has Moved to Medium

Tuesday, December 31, 2013

MIT Media Lab Year-End Round-Up

Anthozoa

Photo credit: Yoram Reshef for Stratasys

CityScope

Photo credit: Jonathan Williams

Collaborative, Crowd-Sourced Symphonies

Led by Professor Tod Machover, the Opera of the Future group created new software tools to crowdsource music for collaborative compositions in Toronto and Edinburgh.

Toronto Symphony: Concerto for Composer and City

Tod Machover, Peter Torpey, Akito Van Troyer, and Ben Bloomberg used Hyperscore and the Social Computing group’s DOG programming language to crowdsource and compose a symphony about Toronto.

“Festival City” at the Edinburgh International Festival

Photo source: http://www.eif.co.uk/blog/festival-city-project

Akito Van Troyer and Tod Machover created Constellation and Cauldron to gather and remix music samples contributed by citizens and lovers of Edinburgh.

Director’s Fellows Program

E14 Fund

The E14 Fund is an independent investment fund that gives recent Media Lab alumni a “six-month runway” to entrepreneurship, in the form of startup support that includes a stipend, legal advice, meetings with venture capitalists, and more. The program also incorporates a way to put a portion of the profits from successful spinoffs back into MIT.

Ed Boyden Awarded the Brain Prize

Photo credit: Dominick Reuter

Focii

A new technique that converts an ordinary camera into a light-field camera, from Kshitij Marwah, Gordon Wetzstein, Yosuke Bando, and Professor Ramesh Raskar of the Camera Culture group. Focii is a light-field camera attachment and software tool that can produce a full, 20-megapixel multi-perspective 3D image from a single exposure of a 20-megapixel sensor.

FreeD

A carving tool designed by postdoc Amit Zoran of the Responsive Environments group. FreeD allows the user to control the carving process while aided by a computer guidance system that is preprogrammed with the desired three-dimensional shape.

Immersion

inFORM

An interactive dynamic display table from Sean Follmer, Daniel Leithinger, and Professor Hiroshi Ishii of the Tangible Media group. inFORM is a Dynamic Shape Display that can render 3D content physically, so users can interact with digital information in a tangible way. inFORM can also interact with the physical world around it, for example moving objects on the table’s surface.

Joe Jacobson Wins the Exner Medal

Photo credit: Shuguang Zhang

MACH

An automated conversation coach from Ehsan Hoque of the Affective Computing group that helps with interview skills, social interactions, and conversational skills. MACH, or My Automated Conversation CoacH, is a software program that simulates face-to-face interactions in different social and professional contexts, and offers feedback to improve performance.

New Faculty: Kevin Slavin and Sputniko!

The Privacy Bounds of Human Mobility

Yves-Alexandre de Montjoye of the Human Dynamics group and Professor César Hidalgo of the Macro Connections group used 15 months of data from 1.5 million people to show that four points (approximate places and times) are enough to identify 95 percent of individuals in a mobility database. These findings have recently been used to understand the use of metadata by the NSA and have been cited in numerous media reports and editorials. Their paper, “Unique in the Crowd: The Privacy Bounds of Human Mobility,” was published in Scientific Reports, a Nature Publishing Group journal.
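To make the idea of identifying someone from a handful of points concrete, here is a minimal sketch of how such a uniqueness estimate could be computed. It is not the authors' code: the `traces` format (a user id mapped to a set of `(place, hour)` points), the function name `uniqueness`, and the toy data are all assumptions made for illustration.

```python
import random
from collections import defaultdict


def uniqueness(traces, p, trials=200, seed=0):
    """Estimate how often p random points from a trace match only that trace.

    traces: dict of user id -> set of (place, hour) points (hypothetical format).
    Returns the fraction of trials in which the sampled points were unique.
    """
    if p < 1:
        raise ValueError("p must be at least 1")
    rng = random.Random(seed)

    # Inverted index: point -> set of users whose trace contains that point.
    index = defaultdict(set)
    for user, points in traces.items():
        for point in points:
            index[point].add(user)

    # Only users with at least p points can be sampled.
    eligible = sorted(u for u, pts in traces.items() if len(pts) >= p)
    if not eligible:
        return 0.0

    unique = 0
    for _ in range(trials):
        user = rng.choice(eligible)
        sample = rng.sample(sorted(traces[user]), p)
        # Users whose traces contain every sampled point.
        candidates = set.intersection(*(index[pt] for pt in sample))
        if candidates == {user}:
            unique += 1
    return unique / trials


# Toy data, invented for this example.
toy_traces = {
    "a": {("cell1", 8), ("cell2", 12), ("cell3", 18)},
    "b": {("cell1", 8), ("cell2", 12), ("cell4", 18)},
    "c": {("cell5", 9), ("cell2", 12), ("cell6", 20)},
}
print(uniqueness(toy_traces, p=1), uniqueness(toy_traces, p=3))
```

In this toy data, knowing more points never makes a person harder to single out, which is the intuition behind the finding above.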

Science Fiction to Science Fabrication

Photo credit: Guillermo Bernal

Scratch 2.0

Seat-e

The Silk Pavilion

An exploration of the relationship between digital and biological fabrication from Professor Neri Oxman, Jorge Duro-Royo, Carlos Gonzalez, Markus Kayser, and Jared Laucks of the Mediated Matter group. The Silk Pavilion comprises a dome of CNC-deposited silk threads. The researchers placed 6,500 silkworms at the bottom rim of this primary structure, where they spun flat non-woven silk patches across the gaps in the dome. The silkworms were affected by spatial and environmental conditions such as the density of the existing silk threads and variation in temperature and sunlight; they migrated to darker and denser areas, creating a unique pattern of spun silk across the dome.

Smart Prosthetics

Photo credit: Simon Bruty

Smarter Objects

What We Watch

A tool to examine who's watching what, when, and where, all over the world, created by Ed Platt, Rahul Bhargava, and Ethan Zuckerman of the Center for Civic Media. What We Watch collects data from YouTube’s Trends Dashboard to determine what videos are popular in any of 61 countries at any given time; it then compares the video trends in one country to those in other countries.
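One simple way to compare trending lists between countries is set overlap. The sketch below uses Jaccard similarity, which is an assumption chosen for illustration and not necessarily the measure What We Watch uses; the function names, country codes, and video ids are hypothetical.

```python
def jaccard(a, b):
    """Share of videos common to both lists, out of all videos in either list."""
    a, b = set(a), set(b)
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def most_similar(trending, country):
    """Rank the other countries by overlap with `country`'s trending videos."""
    base = trending[country]
    scores = {
        other: jaccard(base, videos)
        for other, videos in trending.items()
        if other != country
    }
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)


# Invented sample data: country code -> ids of currently trending videos.
sample_trending = {
    "US": ["v1", "v2", "v3"],
    "CA": ["v2", "v3", "v4"],
    "JP": ["v5", "v6", "v3"],
}
print(most_similar(sample_trending, "US"))
```

A set-based measure ignores rank within each list; a rank-aware comparison would be a natural refinement.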

Tuesday, November 12, 2013

Heads Up on Fellowships for Solid

This coming May 21-22, I'll be in San Francisco to co-chair Solid: Software / Hardware / Everywhere, a conference exploring what's next at the intersection of software and the physical world.

This is an inaugural conference sponsored by O'Reilly Media, and we're encouraging everyone from grad students, to artists, to managers, to top-level execs to come and engage in a multidisciplinary conversation about what's coming next.

To make sure that some of the best and the brightest (regardless of financial circumstances) are able to attend, we're offering ten Solid fellowships to any full-time student or independent innovator who is working on a project within the scope of Solid.

Each fellow will receive:

- $1,000 stipend

- A free pass to attend Solid

- Assistance with travel expenses to the conference

You can get more info and apply here; the deadline is January 15 (11:59 PST).

If you're interested in being a presenter, there's a December 9 deadline for sending in a proposal, which will be reviewed by a diverse programming committee. The process is open to anyone. And if your proposal is accepted, you will get a free pass to the entire event.

More to come as we get closer to the event.

Joi Ito is Director of the MIT Media Lab.

Thursday, October 3, 2013

Welcome New Students!

Perhaps the most important annual event at the Media Lab is the infusion of new talent each September. As a new semester gets underway, new students bring to this Lab a rich and vibrant set of experiences and ideas that renews our spirit of possibility. This year, we welcome one of the largest contingents of master's students in the history of the Lab: 47 bright individuals who will be embarking on a journey of unleashing their creativity and smarts during the next two years. We couldn't be more thrilled for them.

If you're a new student settling down at the Lab, initially you can feel a lot like a child in a candy shop at one end and a teensy bit overwhelmed at the other. A dazzling array of courses, spellbinding research across MIT, and an enthusiastic community on campus can be both endlessly interesting and daunting at the same time. But we have not a shred of doubt that you are going to do great things while you're here. It's sort of like a convex optimization formulation in machine learning: you are positioned to succeed by converging on your objectives. So, as you begin your journey here at MIT, we thought we'd share a few snippets with you.

- Reject the impostor syndrome | Your admission to the Media Lab was not an accident. You were selected from hundreds of competent and competitive folks. Please do not doubt your abilities. It can feel intimidating to be surrounded by extraordinary people, but never second-guess your own abilities and talents.

- Focus on super health | Being a graduate student at MIT is a bit like being an athlete, where health and fitness are of paramount importance. Please triple down on positive habits that elevate your well-being. We want you to conquer the diathesis-stress model by optimizing healthy diet, exercise, and sleep. You'll find it only amplifies your creativity and the effectiveness of your research trajectory.

- Talk to the polymaths | The Media Lab is filled with faculty members who are not only pioneers in their own fields, but also have deep expertise in other fields. It is a function of our antidisciplinary approach to things. Some of our best experiences have been deep and astute discussions with our brilliant faculty.

- Spread your wings further | One of the most powerful aspects of being a Media Lab student is that there are very few required classes. You are free to take any class at MIT and can cross-register at Harvard as well. This freedom has resulted in some long-lasting collaborations for many former and current students. If you are on the cusp of a spin-off, the world is at your command in terms of mobilizing resources here at MIT.

There are few places on this planet like MIT. From the invention of single-electron transistors to the formulation of information theory, the scientific acumen, gravitas, and impact that have emanated from this great campus still give us goosebumps. We're all so lucky to be here. MIT's capacity to inspire and lead is perennial. Now you are a part of this family and every magical attribute of it, and we couldn't be more excited about you and the work that you will be crafting during your stay here.

Karthik Dinakar is a PhD student in the Media Lab's Software Agents and Affective Computing groups.

Birago Jones is the president of the MIT Media Lab Alumni Association.

Joost Bonsen is a lecturer in the Media Lab's Human Dynamics group.

Friday, August 16, 2013

Repertoire Remix: The Sounds of Edinburgh

Composer Tod Machover is creating a "collaborative symphony" called Festival City, to premiere on August 27 at the Edinburgh International Festival (EIF). The work is a sonic portrait of Edinburgh—city and festival—created with input from those who love the city. Machover has been soliciting audio samples of, and stories about, the city, as well as providing tools created by members of the Opera of the Future research group at the MIT Media Lab that will allow everyone to help shape the composition.

On Tuesday, July 9, 2013, Tod Machover and his team presented a one-time-only event to further shape Festival City. Participants in the live-stream event helped to select musical elements from the repertoire of pieces performed at the EIF since its inception in 1947, and pianist Tae Kim combined these repertoire fragments together in real time in constantly evolving ways, following input from online participants.

The simple web interface, Cauldron, showed a colored bubble for each composer selected; participants could "stir" with their mouse on the screen, and the closest "composer bubble" grew in size. At the same time, Machover was controlling a second interface to determine how the composer-fragments related to one another. Input from online participants and Machover immediately changed the size, appearance, and behavior of the bubbles, thus creating Kim's "score" for the improv session, which he followed and performed in real time.
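The "stir" interaction described above can be sketched in a few lines. This is an illustrative guess at the logic, not Cauldron's actual code: the `Bubble` class, the `stir` function, the growth step, and the composer names in the example are all hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class Bubble:
    composer: str
    x: float
    y: float
    radius: float = 20.0


def stir(bubbles, mouse_x, mouse_y, growth=1.0):
    """Grow the bubble whose center is nearest to the mouse and return it."""
    if not bubbles:
        raise ValueError("no bubbles to stir")
    nearest = min(
        bubbles,
        key=lambda b: math.hypot(b.x - mouse_x, b.y - mouse_y),
    )
    nearest.radius += growth
    return nearest


# Example with invented composers and screen positions.
demo_bubbles = [Bubble("Mahler", 0.0, 0.0), Bubble("Britten", 100.0, 0.0)]
grown = stir(demo_bubbles, mouse_x=90.0, mouse_y=5.0)
print(grown.composer, grown.radius)
```

In a live session, each participant's mouse movement would call something like `stir`, and the relative bubble sizes would form the "score" the pianist follows.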

Over 1,000 people participated in the hour-long Repertoire Remix project on The Guardian website. Aside from participants from Edinburgh and Cambridge/Boston, we were pleased to see participants from all over the UK: Aberdeen, Basingstoke, Bristol, Canterbury, Chester, Cornwall, Coventry, Inverness, Leeds, London, Macclesfield, Manchester, Newcastle, Norfolk, Norwich, Nottingham, Shropshire, Sussex, and Sheffield. People also joined from all over the world: Brazil; Toronto, Canada; Beijing, China; Paris, France; Stuttgart, Germany; Kinsale, Ireland; Amsterdam, The Netherlands; California, Indiana, and New York, USA; Thailand; and Turkey.

Monday, July 22, 2013

Google Glass and the Eyewitness/Journalist Gap

Chris Barrett, a PR professional who is one of the few thousand beta testers of Google Glass, posted a video on YouTube on July 4, announcing, "This video is proof that Google Glass will change citizen journalism forever." The video, which Barrett shot on the boardwalk in Wildwood, New Jersey, features the tail end of a fist fight and the arrest of two participants by local police. Discussing the video with VentureBeat, Barrett explained the benefit of Google Glass in filming these events: "I think if I had a bigger camera there, the kid would probably have punched me. But I was able to capture the action with Glass and I didn't have to hold up a cell phone and press record."

Despite Barrett's fear of being assaulted, I noticed that many of the other witnesses of the fight also decided to film events with their phones, and none were menaced by the police or the brawlers. Google Glass, capable of surreptitiously recording video, is less a revolution than an extension of an existing trend: we all carry cameras and, when something out of the ordinary happens, we record it.

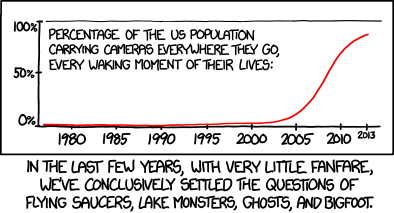

Randall Munroe, one of the Internet's most astute social commentators, has already thought through one of the key implications of pervasive cameras: if 10 million tourists with camera phones haven't photographed the Loch Ness Monster, we can probably finally declare it a myth. But the most thoughtful commentator on the implications of pervasive cameras is a man who's been wearing a camera for years: Steve Mann.

Dr. Steve Mann is a professor at the University of Toronto, an alum of the Media Lab, and a pioneer in the design of wearable computer systems. Since 1981, he has been building and wearing computer systems that can record what he sees, as well as superimpose computer-generated imagery on his field of vision. Mann has logged tens of thousands of hours wearing different generations of his EyeTap system, and has written eloquently about both how he uses the tool and how others react to him when he's wearing a camera.

Mann coined the term "sousveillance," watching from below, as an alternative to "surveillance," watching from above. When the UK government deploys 1.85 million cameras to monitor city streets, or the US government intercepts billions of communications, these powerful institutions send a message: consider yourself watched at all times and behave accordingly. Mann suggests that we might invert the paradigm by pointing the camera at institutions and authorities, reminding them that citizens are watching as well.

Compared to the power of monitoring all digital communications in a nation, the ability to film police making an arrest initially seems like weak tea. (Even asserting rights to this form of sousveillance has required protracted legal battles, and commentator Steve Silverman strongly discourages people from recording police surreptitiously, as Barrett did.) But protesters in the Occupy movement took to live-streaming video from their encampments, both to share proceedings with people who couldn't be physically present, and to ensure that any police violence would be documented and broadcast. (Charlie DeTar, a doctoral student at the Media Lab, produced a map visualizing these streams.) The firing of Lt. John Pike, the infamous "pepper-spray cop" who assaulted students at UC Davis, suggests that this documentation of abuse was effective.

Sousveillance has also become a routine part of election monitoring in much of the world. Technologies like Ushahidi, which allows thousands of people to report on an event and place their reports on an interactive map, enable citizens to monitor elections by photographing long lines at polling places or documenting voting irregularities.

When Barrett declares a revolution in citizen journalism due to Google Glass, he's thinking of this sense of sousveillance. With widespread use of Google Glass, it's more likely that abuses of power will be documented, as well as natural disasters and breaking news. But Barrett's video shows that there's a giant gap between an eyewitness account and a journalistic story. Yes, Barrett's video shows an arrest, but it doesn't help us figure out who was fighting and why, or what happened to the men after they were arrested. Pervasive cameras create more inputs for journalism, but they don't automatically answer the questions of who, what, where, and why that we expect of good journalism.

When Mann discusses sousveillance, he often talks about another facet of the idea: by wearing a camera and pointing it at people, he forces people to think about the implications of being watched. People often react badly to Mann's sousveillance, complaining that his camera is an invasion of their privacy. Mann hopes that this discomfort at being watched will inspire deeper consideration of the ways people are watched by corporations and governments.

Watching Barrett's video, it's easy to feel uncomfortable: for the people watching in the crowd who now feature in his film, and for the participants in the fight who've now been viewed by almost a million people online. Perhaps that discomfort will translate into a discussion of societal norms around cameras and lead to a legal or societal agreement that we don't record people without their permission—except in extraordinary circumstances.

There's another possibility. If Google Glass and other wearable cameras become pervasive, the uncomfortable and provocative experience of being watched eventually disappears and we simply get used to the idea that public spaces are spaces in which we expect to be filmed. Before we accede to that possibility, it's worth having an extended conversation about the balance between the power we gain from sousveillance and the constraints that surveillance puts on our behavior.

Ethan Zuckerman is director of the MIT Center for Civic Media.

Tuesday, June 18, 2013

Welcome to New Faculty Member Hiromi Ozaki!

Hiromi Ozaki, also known as Sputniko!, has joined the Media Lab as an assistant professor. Ozaki is an artist who uses design to explore technology's impact on everyday life and to imagine the future. Her art practice includes creating songs and music videos about products she has designed, which she posts on social networks and online video platforms to encourage discussion outside traditional academic spheres.

"Hiromi Ozaki's art provokes people to think about the social, cultural, and ethical implications of new technologies. We're looking forward to seeing what happens when she brings her great energy, imagination, and creativity to the Media Lab," says Professor Mitchel Resnick, head of the Media Lab's academic Program in Media Arts and Sciences.

Ozaki's cross-boundary work is emblematic of the Media Lab's antidisciplinary, collaborative environment, and her research at the Lab will explore storytelling and embrace the social, cultural, and ethical implications of technology created at the Lab. She envisions her research at the Media Lab as deeply symbiotic and collaborative with other Lab research groups, with the aim of stimulating and developing new technologies that will, in turn, encourage discussions both within and outside the MIT Media Lab and inspire and shape future Lab innovations.

"The key thing that excites me about Hiromi's work is that she elegantly synthesizes science, computation, networking, art, and design into beautiful "hacks" very appropriate to the contemporary medium of the Internet–social media, video, music and social commentary," says Media Lab director Joi Ito. "She's a true artist and producer that will connect to the Media Lab at many of its levels."

Hiromi Ozaki has presented her film and installation works at exhibitions such as Talk to Me (MoMA, New York, 2011), Transformation (Museum of Contemporary Art Tokyo, 2010), Light of Silence (Aomori Museum of Art, 2012), and the Third Art & Science International Exhibition in Beijing (2012). She has received the “Passion without Borders” Award from the Japan National Policy Unit's Cabinet Secretariat (2012), honorary mentions in both Ars Electronica's Hybrid Art Category (2013) and Interactive Art Category (2012), and Jury Recommended Works at the 14th Japan Media Arts Festival (2011). Recognizing her as an active social media influencer in Japan, the Japanese Ministry of Economy, Trade and Industry (METI) selected Ozaki as the youngest member of the Cool Japan Advisory Council (2012-2013). She received her MA in design interactions at the Royal College of Art, London. Prior to studying at the RCA, she received her BSc in mathematics and computer science at Imperial College, University of London.